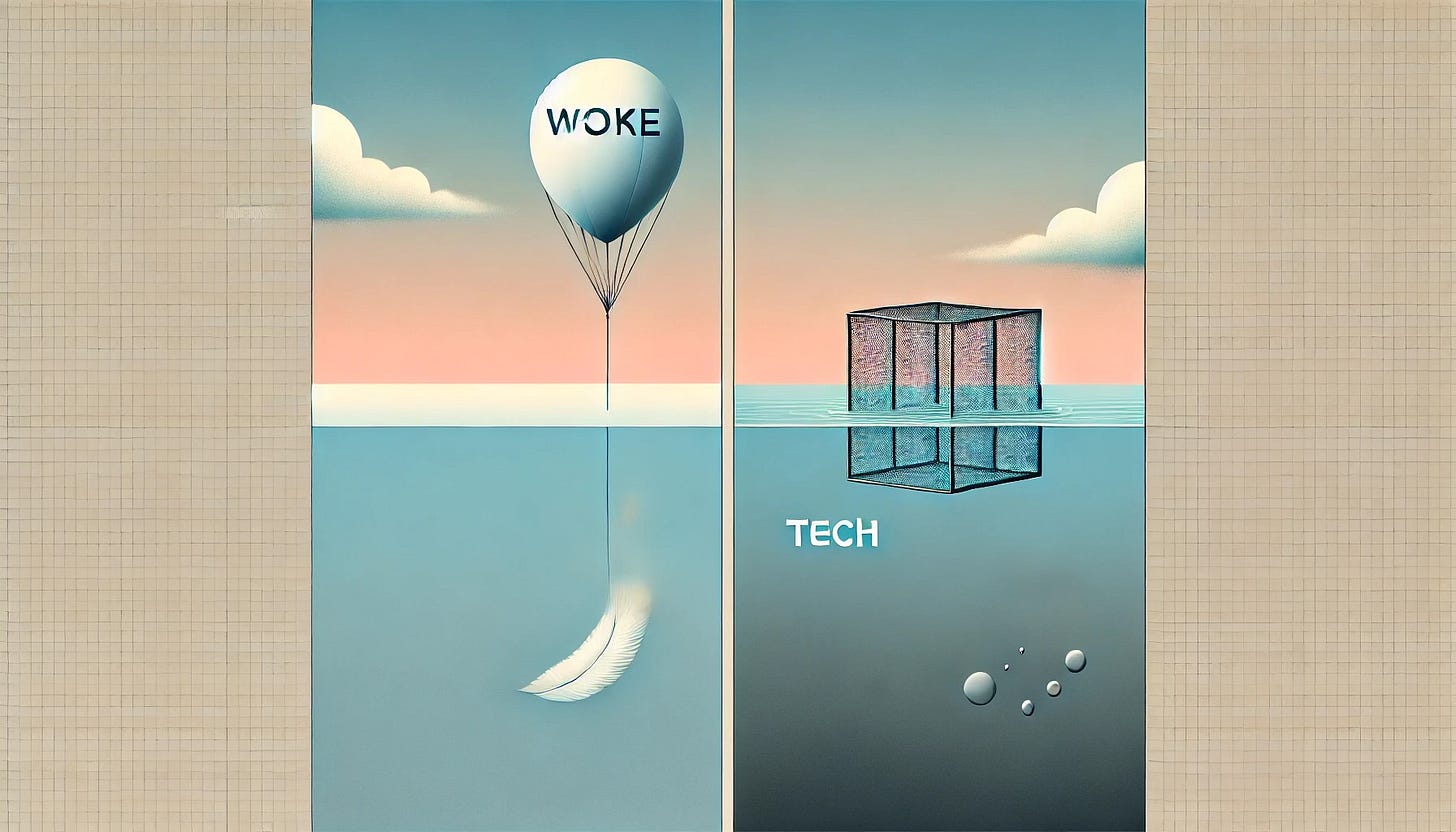

Woke Floats, Tech Sinks: The New Policy Asymmetry – Deep Dive


In recent years, social policies around concepts like “woke” culture and diversity, equity, and inclusion (DEI) have surged in prominence across institutions. These initiatives are often guided by ethical principles – fairness, equality, justice – and born of moral discussions about historical injustice. Meanwhile, governments on both sides of the Atlantic are scrambling to regulate fast-moving technologies (from social media to artificial intelligence) that many policymakers view as potentially very dangerous. Despite similar ethical underpinnings (protecting people from harm or unfairness), these two domains have starkly different regulatory trajectories. Progressive social policies heavily influence workplace and educational culture with relatively little formal law, whereas technology – especially in the EU – is increasingly hemmed in by detailed legislation. This piece examines how “woke”/DEI policies developed out of ethical guidelines, and contrasts that with the often clumsy attempts of politicians to rein in technology they barely grasp. It also compares the toxicity and controversies surrounding each, and how the EU and US approach these issues in markedly different ways.

Origins of Woke and DEI Policies – Ethics and Moral Vision

The rise of DEI and “woke” initiatives can be traced back to mid-20th-century social reforms. In the United States, the landmark Civil Rights Movement – especially the Civil Rights Act of 1964 – laid the legal and moral groundwork by outlawing overt discrimination. In fact, workplace diversity training programs first emerged in the mid-1960s, following new equal employment opportunity laws and affirmative action policies. The ethical vision was clear: to remedy past injustices and ensure individuals from all backgrounds were treated with fairness and dignity. Early corporate diversity efforts often took the form of mandatory trainings and HR policies intended to help employees adjust to newly integrated offices. These policies were explicitly rooted in moral discussions about equality – epitomized by Dr. Martin Luther King Jr.’s ideal that people “not be judged by the color of their skin, but by the content of their character.” Such guiding ethics of color-blind fairness and equal opportunity were the philosophical bedrock supporting early diversity initiatives.

However, implementing these ideals proved challenging. Many first-generation diversity/anti-bias trainings were clunky and ineffective in practice. A Harvard Business Review study found that mandatory bias training sessions (often day-long workshops with do’s-and-don’ts lists) rarely produced lasting change in employee attitudes or company diversity metrics. Some employees even perceived these trainings as overly controlling, prompting backlash or attempts to skirt the rules, ultimately making the exercises counterproductive. In short, simply telling people how to behave “ethically” did not automatically transform organizational culture. This disconnect led to calls for better frameworks and genuine dialogue rather than box-checking compliance.

Ethical guidelines and moral reasoning nonetheless continued to drive the evolution of DEI policies. Over time, many organizations developed codes of conduct and mission statements emphasizing values like inclusivity, respect, and equal dignity for all. For example, one modern approach – Irshad Manji’s “Moral Courage” diversity training – explicitly lists guiding principles such as “no shaming or blaming,” affirming that “no matter what group you’ve been born into, you have equal worth and dignity.” It even broadens the definition of diversity to include diversity of viewpoint, rejecting the notion that individuals of a certain race, gender, or faith all think the same way. These kinds of ethical guidelines underscore that the ultimate goal of “woke”/DEI efforts is to treat people as individuals with inherent worth, creating environments where everyone can thrive. In practice, this has meant initiatives like unconscious bias training (aimed at fairness in hiring), employee resource groups for marginalized communities (fostering belonging), and equity programs to mentor or sponsor under-represented talent. All are grounded in a moral argument: that it is “the right thing to do” to promote inclusion and remedy societal inequities.

From Social Movements to Policy: How “Woke” Went Mainstream

Social movements and moral dialogues have repeatedly pushed “woke” principles from the fringes into official policy. In the late 20th century, affirmative action and equal opportunity laws in the U.S. (and anti-discrimination laws in Europe) turned ideals of racial and gender fairness into concrete mandates. By the 2010s and 2020s, new waves of activism – #MeToo, Black Lives Matter, #StopAsianHate, and more – sparked a renaissance in DEI efforts. For instance, after the murder of George Floyd in 2020 and the worldwide protests against racial injustice that followed, companies and universities rushed to respond to the moral outcry. There was a 123% spike in the creation of DEI-related jobs in the months after the mid-2020 protests, as employers scrambled to revise hiring practices and company culture. Many CEOs publicly pledged to improve diversity metrics, allocated budgets for inclusion programs, and issued heartfelt ethical statements about standing against racism. In short, a “moral reckoning” translated into a rapid expansion of formal diversity policies.

These policies were often supported by both ethical arguments and business logic. Ethically, leaders framed DEI as aligning with their organizational values or corporate social responsibility. Practically, they pointed to evidence (frequently cited from consulting firms) that diverse teams drive innovation and better performance. A well-known McKinsey study, for example, found companies in the top quartile for racial and ethnic diversity were more likely to financially outperform their industry medians. Similarly, research from BCG in 2022 indicated that strong DEI practices correlate with lower employee turnover and higher job satisfaction, presumably because people feel safer and more valued at work. These findings were touted in support of DEI – an appeal to enlightened self-interest alongside moral duty. In both the EU and the US, public agencies and industry groups also issued ethical guidelines to bolster these policies. For example, the European Commission has promoted diversity charters and “gender balance” targets (like the recent EU directive setting a 40% target for women among non-executive directors of large listed companies), rooted in principles of equality. In the U.S., professional bodies (like the Society for Human Resource Management) partnered with diversity experts to develop inclusive training curricula that emphasize empathy and mutual respect. All these efforts illustrate how ethics and policy intertwined: public moral pressure led institutions to adopt policies, which were then undergirded by formal guidelines about how to be fair and inclusive.

The result is that by the mid-2020s, “woke” social constructions like DEI have become mainstream in many organizations – albeit under evolving terminology (“diversity and belonging,” “inclusive culture,” etc.). These policies are backed by a mix of ethical conviction (e.g. “we need to do better for historically marginalized groups”) and compliance concerns (avoiding lawsuits or reputational damage for discrimination). Importantly, though, most DEI or woke policies are self-imposed or institutionally driven rather than dictated by hard law. Outside of specific legal requirements (e.g. non-discrimination laws or quotas), much of the woke movement advances through cultural and corporate norms, not government regulation. This feature contrasts sharply with the realm of technology, as we explore next.

Controversies and “Toxicity” in Woke Policies

While rooted in noble ethics, woke/DEI policies have not been free of controversy. Detractors argue that in practice some of these programs became excessively dogmatic or divisive – what critics might label “toxic wokism.” For example, mandatory training sessions that excessively shame or single out certain groups can breed resentment. Even diversity advocates acknowledge that if DEI is “poorly framed or implemented without transparency, it can create resentment” among employees. There have been instances of overzealous activism under the DEI banner: employees or students feeling they must self-censor dissenting opinions, or reports of “cancel culture” where individuals are ostracized for deviating from the new orthodoxy. Some conventional anti-bias workshops have indeed led to “individuals being unfairly singled out, ostracized, and humiliated,” with animosity developing among coworkers – outcomes directly counter to the intended inclusion. In this sense, the ethical aims of woke policies can backfire if handled carelessly, fostering a climate of fear or groupthink instead of open dialogue. This is the toxic side-effect that opponents seize upon.

Conservative and libertarian critics frequently contend that the woke agenda in institutions has become illiberal and coercive. They point out that certain diversity trainings or campus speech codes enforce a rigid ideological line, arguably stifling free expression. As one commentator put it, many DEI programs have turned into “polarizing and toxic” endeavors, sometimes “treat[ing] employees in abusive ways” under the guise of training. On the flip side, defenders of DEI argue that much of the “anti-woke” backlash is itself toxic – a reactionary attempt to roll back civil rights gains. Notably, reformers like Irshad Manji criticize right-wing crusaders who try to ban any discussion of racism or bias; she notes the irony that those preaching “individual liberty” often respond with measures “at least as authoritarian, at least as toxic … as anything they accuse the other side of”, creating a vicious cycle of culture-war conflict.

A key observation is that refereeing these ethical disputes is difficult for governments, because “wokeness” largely concerns speech and values. In liberal democracies, ideological positions – however polarizing – enjoy protection under free speech principles. Thus, unlike regulations on products or finances, regulating an ideology raises constitutional red flags. A case in point: in 2022 Florida enacted the “Stop WOKE Act,” which forbade schools and employers from promoting certain “divisive concepts” derived from critical race theory. The law was meant to curb what the state’s governor called “pernicious ideologies” of the far-left. Yet a federal judge swiftly blocked key portions of this act, finding that it turned the First Amendment on its head by letting the state police private speech. The court observed that normally the government cannot censor speech, but here Florida tried to ban private entities from expressing favorable views on “woke” concepts. This ruling underscored that woke policies, even if deemed harmful by some, “easily escape” direct regulation because any such law runs afoul of free speech and academic freedom. In Europe too, there is no equivalent of banning “woke ideology” – debates around “wokism” play out in media and politics, but governments generally focus on outlawing hate speech or discrimination, not mandating or prohibiting progressive values. In summary, the checks on woke policies are largely social, not legal: public opinion, shareholder or donor pressure, and internal leadership choices decide how far an institution goes on DEI. This relative lack of formal regulation means that if a particular woke policy becomes toxic or overreaches, the remedies tend to be internal reforms or public backlash, rather than government intervention.

Politicians vs. Technology: Regulating What They Don’t Understand

In stark contrast to the hands-off approach toward ideological movements, when it comes to technology many lawmakers have been far more eager to step in – even when they don’t fully grasp the tech in question. There is a growing consensus in both the EU and US that Big Tech and AI need oversight, but the competence of regulators is questionable. It has become almost a running joke how out-of-touch some legislators are with modern tech. For example, during a 2021 U.S. Senate hearing, a senior senator famously demanded a Facebook executive “commit to ending Finsta.” He conflated “Finsta” – a slang term for users’ private secondary Instagram accounts – with an official product Facebook could just shut down. The Facebook safety head had to gingerly explain that “Finsta is slang… not a product” – but the senator persisted, insisting the company “end that type of account.” The exchange went viral as an illustration of digital illiteracy in Congress. In another cringe-worthy moment, a Congressman in 2018 berated Google’s CEO about why his Apple iPhone was acting up, betraying confusion about basic tech distinctions. As recently as 2015, influential senators openly admitted they barely used email in their daily lives. These anecdotes would be humorous if the stakes weren’t so high. Critics argue that such officials have “no idea” how the underlying technologies work – yet feel entitled to draft sweeping regulations for them. As a libertarian columnist acerbically observed, “Congress cannot competently regulate things it doesn’t even begin to understand,” and nowhere is this ignorance more pronounced than with Big Tech.

The rapid rise of artificial intelligence has thrown this issue into sharp relief. The overnight sensation of OpenAI’s ChatGPT in late 2022 sparked a regulatory rush – politicians hastily convening hearings and task forces to address AI risks. In the United States, Senate Majority Leader Chuck Schumer held closed-door meetings with tech CEOs about AI, and every single tech leader raised their hand in agreement that government should play a role in regulating AI. This was extraordinary – tech executives practically begging for rules – and it reflects the widespread view that AI poses serious societal risks if left unchecked. From biased algorithms to privacy invasion to hypothetical “rogue AI” scenarios, policymakers hear that AI could be very dangerous. The European Union, true to form, moved even faster: it proposed the EU AI Act back in 2021 (well before ChatGPT’s debut) and by 2023 had a draft comprehensive law for AI oversight. The EU’s approach explicitly uses a risk-based, precautionary framework, imposing strict rules on “high-risk” AI systems and even outright bans on certain harmful uses (like social credit scoring). This proactive stance is driven by an ethics-first philosophy: European policymakers want “human-centric” AI that respects fundamental rights, and they are willing to regulate aggressively to ensure that. As a result, the EU is often seen as overregulating technology, at least compared to the U.S.

However, this assertive regulation collides with the reality of lawmakers’ limited tech expertise. Regulating AI and digital platforms is inherently challenging – the technology evolves far faster than laws. Governments have “never been able to keep up with technological advances,” and indeed politicians have regularly displayed an inability to understand the very field they seek to control. In the U.S., this has led to a peculiar dynamic: tech CEOs like Mark Zuckerberg or Sam Altman are summoned to testify, effectively educating lawmakers and sometimes even asked to write the rules for their own industry. Aleksandra Przegalińska, an AI researcher, noted that relying on CEOs to tell Congress how to regulate AI is “not necessarily the best way to go,” and that a collective effort including independent experts and civil society is needed. The knowledge gap remains a huge hurdle. There’s a genuine concern that ill-informed regulations could have unintended consequences – either failing to mitigate the real dangers or stifling beneficial innovation.

Tech Overregulation in the EU vs. the US Approach

The European Union has built a reputation as the world’s strictest tech regulator, which some hail as visionary and others criticize as heavy-handed. Beyond the AI Act, the EU adopted GDPR (the General Data Protection Regulation), which took effect in 2018 and fundamentally changed data privacy worldwide. This pattern of robust tech rules is often explained by the “Brussels effect” – the EU leveraging its large market to set global standards. Underlying this is a philosophical difference: the EU tends to apply the precautionary principle with new technologies, preferring to err on the side of safety and ethics. As one analysis puts it, the EU’s AI regulation is “characterized by a strong precautionary and ethics-driven philosophy,” prioritizing high ethical standards and fundamental rights even at the cost of greater compliance burden. The EU explicitly aspires to project “normative power” through regulatory leadership – showcasing values like privacy, transparency, and human dignity in tech governance.

However, this approach has sparked intense debate about overregulation and its economic downsides. Europe’s tech industry is notably weaker than that of the U.S. or China, and some point fingers at the regulatory climate. Critics emphasize that the EU’s fixation on rules – however commendable in principle – may deepen its industrial weaknesses. By consciously making it harder to deploy certain AI applications, the EU could deter venture investment and drive talented innovators elsewhere. In other words, too much red tape can be toxic in its own way: it might strangle the very innovation Europe seeks, leaving the continent a consumer of foreign tech rather than a creator. EU officials are not unaware of this, and indeed a recent “deregulatory turn” suggests a bit of self-correction. They have loosened some proposed AI rules to boost competitiveness, recognizing that a balance must be struck between excellence and trust. Still, compared to the U.S., the EU remains far more willing to impose sweeping tech mandates.

In the United States, the approach to tech regulation has been comparatively laissez-faire, though this is changing in response to public pressure. Historically, American lawmakers preferred to let tech companies self-regulate, fearing that stringent laws might hinder the dynamism of Silicon Valley. This is why, for example, the U.S. to this day lacks a single federal data protection law equivalent to GDPR – instead relying on a patchwork of state laws and industry self-policing. But with mounting concerns over issues like social media’s impact on mental health, misinformation, and now AI’s unpredictable capabilities, U.S. politicians from both parties are calling for tougher oversight (“Big Tech’s day of reckoning,” etc.). The irony is that these calls come even as many of those politicians display only a shallow understanding of the technologies. The result has been a lot of high-profile hearings and proposed bills, but (as of 2025) relatively few enacted laws specifically targeting AI or algorithms. The U.S. did implement some narrower regulations – for instance, federal guidance on AI ethics for government use, and the Biden Administration pushing voluntary AI safety commitments with leading AI firms – but nothing like the comprehensive regime the EU is constructing. Some observers worry that when U.S. laws do eventually materialize, they might reflect knee-jerk fears or political grandstanding rather than well-calibrated solutions, given lawmakers’ track record.

To sum up the transatlantic contrast: Europe leans toward caution and control, even if the bureaucracy might slow innovation, whereas the U.S. has leaned toward innovation and free rein, even if it means playing catch-up when harms emerge. European tech regulation can feel overzealous to American eyes – for example, classifying and scrutinizing AI systems by risk level, or forcing Big Tech to police content and competition more strictly. American policy (until recently) often assumed the market would correct excesses and that heavy regulation was a last resort. But as AI is increasingly viewed as a transformational and potentially dangerous force (some commentators even invoke doomsday scenarios of AI threatening humanity), the gap between the EU and US approaches may be narrowing. Both are grappling with how to harness innovation without unleashing its dark side, and neither has a perfect answer yet.

A Tale of Two Overreaches: “Woke” vs. Tech Regulation

The parallel tracks of social policy and tech policy reveal an intriguing double standard. In the domain of woke/DEI policies, change has been driven by ethical momentum – moral arguments about social justice – with relatively minimal formal regulation. These policies can certainly become toxic (in terms of workplace climate or public polarization) if overdone, but they are checked mainly by social feedback, not by law. Indeed, when legislatures have tried to intrude (as in Florida’s anti-woke law), they’ve hit legal barriers protecting free speech. Thus, one could say “wokeness escaped regulation” because our legal systems are designed to tolerate even contentious ideas in the public square. This freedom allowed woke ideology to spread quickly through cultural institutions, for better or worse, without an obvious brake except the court of public opinion.

Conversely, in the realm of technology, we see a propensity to overregulate based on fear of the unknown. Powerful technologies like AI are often portrayed in almost apocalyptic terms – as existential threats or uncontrollable forces – leading to intense regulatory responses. Policymakers, especially in Europe, treat tech with a heavy dose of precaution: better to be “safe than sorry.” The ethical impulse here is protective – guarding privacy, safety, democracy itself – and it translates into detailed rules and oversight mechanisms. Yet this earnest drive to “save society from dangerous tech” can become overbearing, especially when those writing the rules don’t fully comprehend the tech. We end up with scenarios like politicians grilling CEOs with misguided questions, or drafting laws that industry experts warn are impractical or stifling. The toxicity in this context is more about regulatory excess – the risk of smothering innovation and competitiveness under a blanket of well-intentioned but cumbersome rules. Europe’s struggle to foster home-grown tech champions is illustrative: some argue that an overly strict regime amounts to “trading away” innovation in exchange for theoretical safety.

Ultimately, both woke policies and tech regulations are attempts to do the right thing – one in the social justice arena, the other in the technological safety arena – but each comes with pitfalls when taken to extremes. Woke policies remind us that ethics can be subjective and enforcement tricky; a policy meant to include can inadvertently exclude or divide if mismanaged. Tech regulations remind us that even well-meaning rules need technical savvy; regulating out of fear without understanding can create more problems than it solves. There is also a noteworthy asymmetry: society seems willing to accept informal governance (through norms and ethics) for big social changes, yet it demands formal governance (laws and regulations) for big technological changes. Perhaps this is because technology’s harms (data breaches, AI errors, etc.) are more tangible and directly attributable to specific actors, whereas social movements are diffuse and rooted in free expression.